Catalog ops

Operations platform — open source, self-hostable

Automate your day‑to‑day operations. Capture human input for the exceptions.

We review the processes you want to automate, identify blockers, and deliver them as workflows with training material. You won't need to adjust them — if something changes, we update them for you.

Connect to

- Shopify

- SharePoint

- Business Central

- HubSpot

- S3-compatible storage

- CSV files

- …or your local accounting software

Who it's for

Runtara is for ops teams who want to move faster and who know that manual processes are expensive.

Processes that span two or more systems, at least one of which isn't mainstream: a specific local accounting tool, a legacy inventory system, something nobody else integrates with. Or processes inside a single tool that has no built-in automation but exposes an API.

01 — Work we've shipped

What we've built

E-commerce

Inventory levels synced between an invoicing tool and Shopify.

Revenue ops

Business Central connected to HubSpot — beyond the standard HubSpot integration.

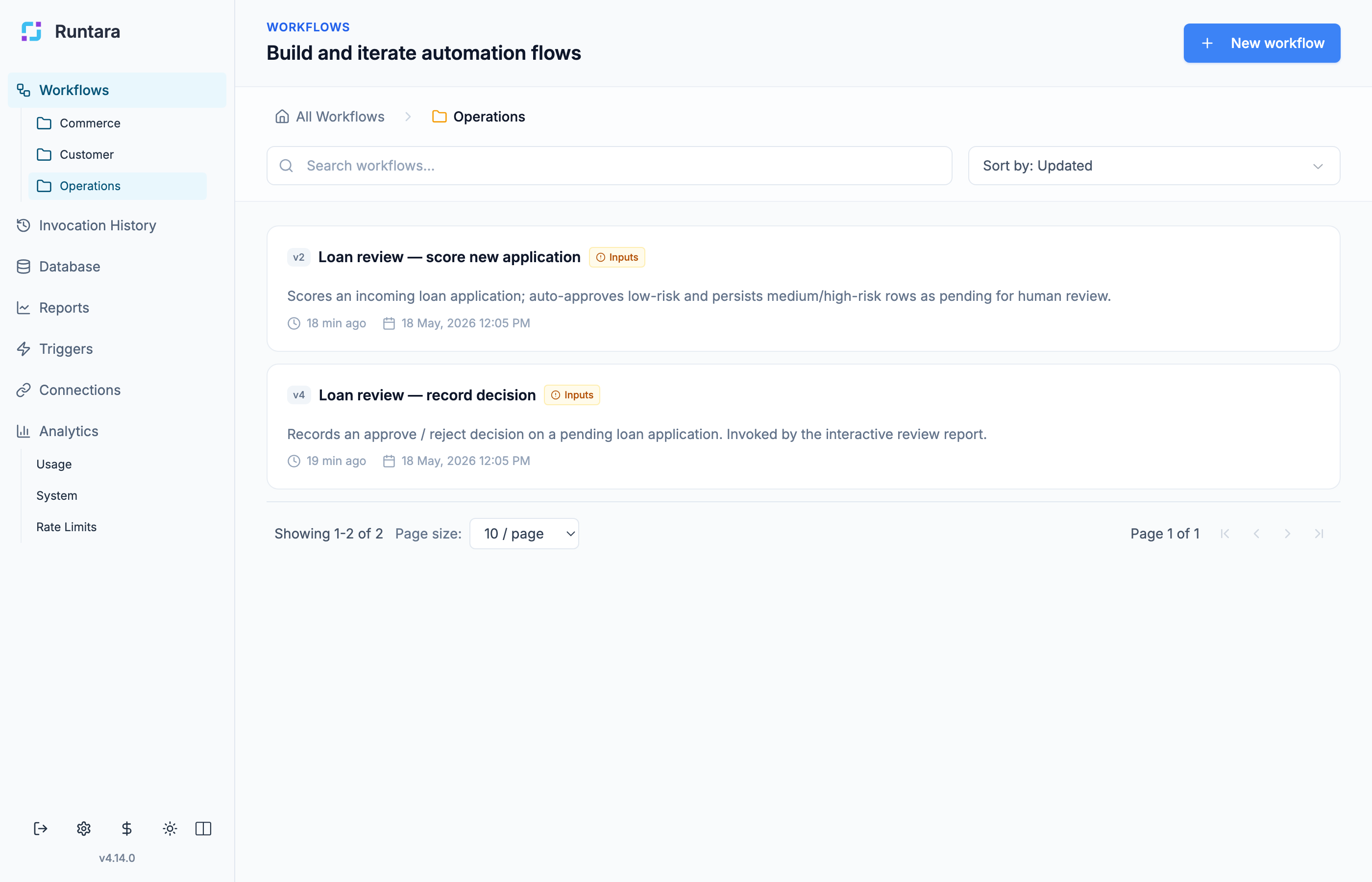

Lending

Automated loan review with human approval.

Sales

Prospect transactional data normalized and classified for faster, more accurate quotes.

Yours

Tell us what process is eating your team's time. We'll tell you if we can automate it.

Book a 30-minute call →

02 — Routine

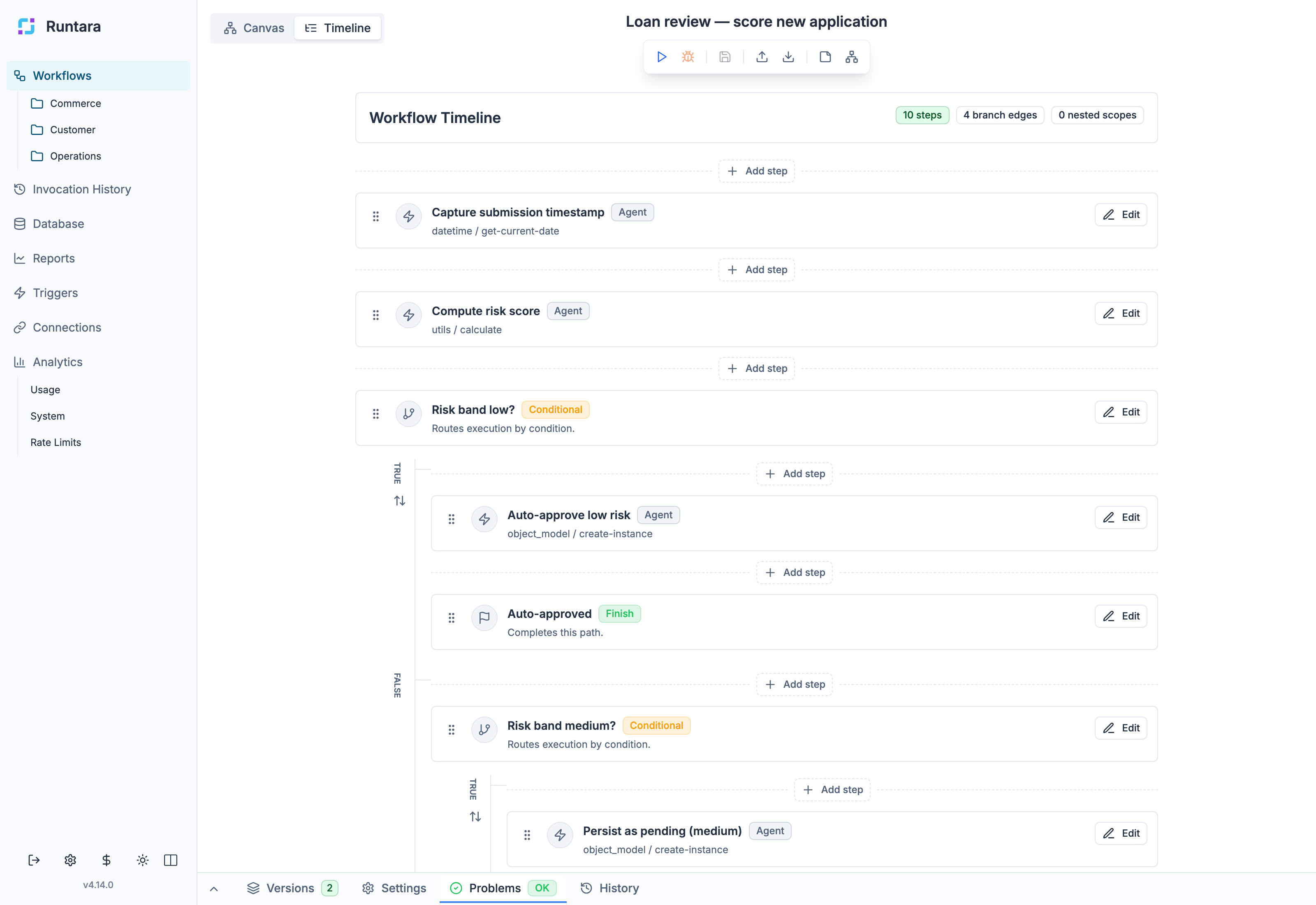

Automate the routine.

Schedule it, trigger it, or run it on demand. Connectors to the systems your team already uses — and the ones nobody else builds for.

- Workflows organized by operational area

- Scheduled, HTTP, manual, and event-driven triggers

- No invocation limits, no per-task pricing

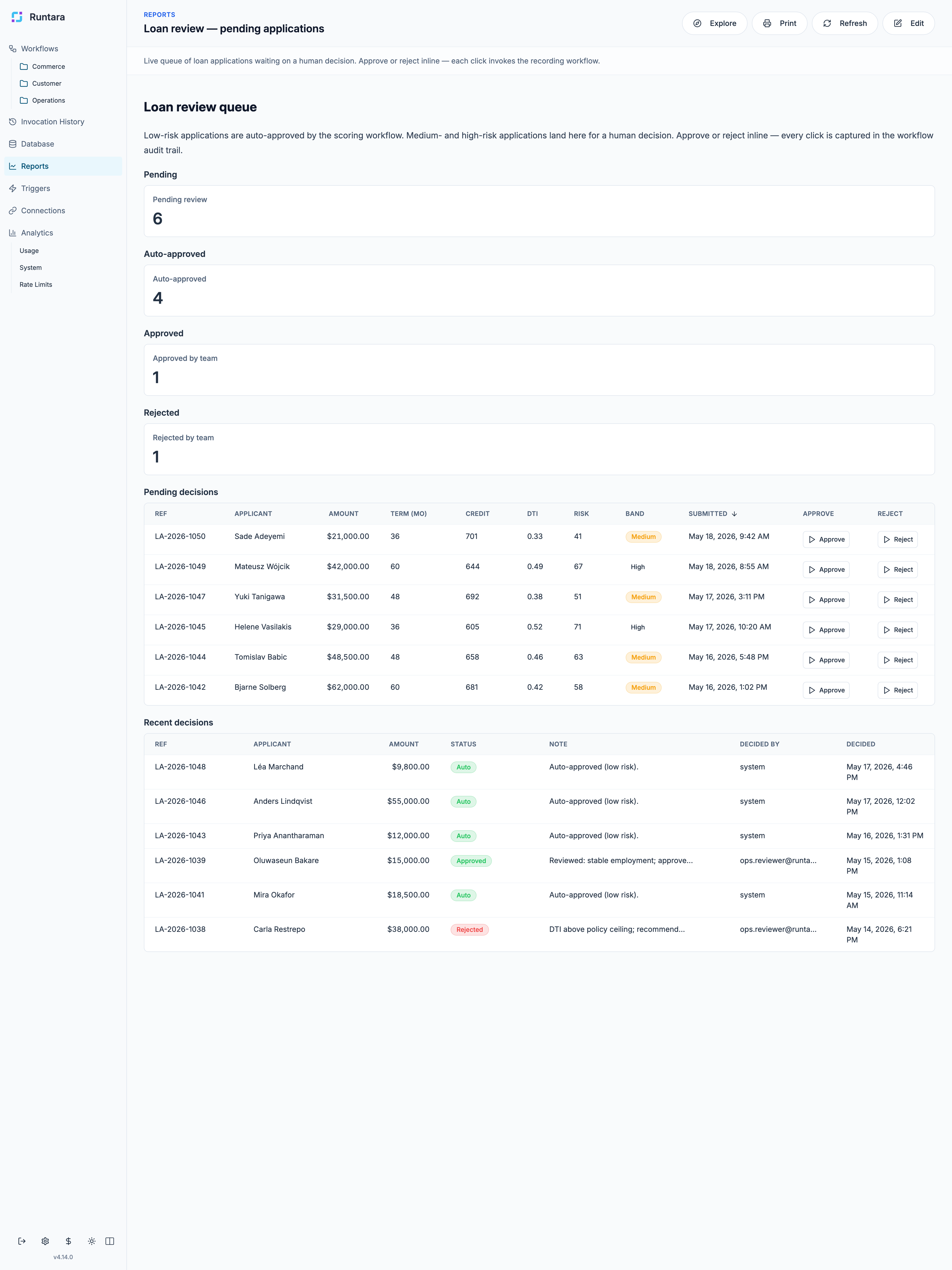

03 — Exceptions

Handle the exceptions.

Some decisions need a person. Adjusting a classification. Approving a loan. Deciding which mapping is right.

Your team handles these in interactive reports that fetch live data, call workflows, and write changes back. Same engine as the workflows, same audit trail.

- Live data from connected systems

- Approvals, edits, classifications, writebacks

- Every action recorded and replayable

04 — The learning loop

Decisions your team makes become rules the workflow remembers.

A classification adjusted today doesn't surface again next week. An approval pattern becomes a rule. The same call doesn't get made twice.

RUN

Workflow runs, hits an exception it can't decide.

REVIEW

Surfaces in a report. Someone on your team decides.

REMEMBER

Decision saved as a rule, mapping, or approval.

REUSE

Next run with the same case: handled automatically.
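To make the four steps concrete, here is a minimal sketch of the loop, assuming a decision store keyed by an exact case string. The names `DecisionStore`, `run_case`, `auto_decide`, and `review` are hypothetical illustrations, not Runtara's API.

```python
from typing import Callable, Dict, List, Optional


class DecisionStore:
    """Hypothetical store of decisions a person made, keyed by case."""

    def __init__(self) -> None:
        self._rules: Dict[str, str] = {}

    def lookup(self, case_key: str) -> Optional[str]:
        return self._rules.get(case_key)

    def remember(self, case_key: str, decision: str) -> None:
        self._rules[case_key] = decision


def run_case(
    case_key: str,
    auto_decide: Callable[[str], Optional[str]],
    review: Callable[[str], str],
    store: DecisionStore,
) -> str:
    # RUN: the workflow tries its own logic first.
    decision = auto_decide(case_key)
    if decision is not None:
        return decision
    # REUSE: a person already decided this exact case on an earlier run.
    remembered = store.lookup(case_key)
    if remembered is not None:
        return remembered
    # REVIEW: the workflow can't decide, so a person does.
    decision = review(case_key)
    # REMEMBER: save the decision so the next run handles it automatically.
    store.remember(case_key, decision)
    return decision


# Usage with hypothetical callbacks: the workflow never decides on its own,
# and the "person" always answers with the same classification.
reviews: List[str] = []


def never_sure(case_key: str) -> Optional[str]:
    return None


def ask_person(case_key: str) -> str:
    reviews.append(case_key)  # record that a human was asked
    return "category:packaging"


store = DecisionStore()
first = run_case("SKU-123 cardboard box", never_sure, ask_person, store)
second = run_case("SKU-123 cardboard box", never_sure, ask_person, store)
# The person is asked once; the second run reuses the saved decision.
```

Keying on an exact string keeps the sketch simple; recognizing "the same case" in practice would likely need a normalization or matching step, which this sketch leaves out.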

05 — How we work

We build and maintain your workflows. You don't have to.

Most of our customers never open the workflow editor. They tell us what they want automated, and we keep it running.

You tell us what you want automated. We review the process, identify potential blockers, and flag the adjustments your team would need to make. Up front, before any work starts.

We build the workflows. You receive a working set, with training material on how to use it. Your team learns the part they care about — the reports and decisions, not the wiring.

We maintain them. When an API changes, a vendor updates a schema, or your process shifts, we update the workflow. You can adjust it yourself if you want. Most don't.

06 — Why us

A platform we operate for you.

No invocation limits. No per-task pricing. No "but it's only 10,000 runs." Run what you need to run.

Hosted on your terms. Managed cloud by default, self-hosted on your own infrastructure when data residency, sovereignty, or air-gapped operation requires it.

Open source. Runtara, our execution engine, is open source. Read it, audit it, run it yourself.

Configured and maintained. We build the workflows and keep them up to date. You handle the parts only you can.

07 — Under the hood

For the technical evaluator who has to bless this.

Four properties that matter when you're putting money, inventory, or regulated flows through us.

ISOLATION

Each customer runs in their own environment. No shared compute, no shared data plane. Self-hosting is available for deployments that require it.

DURABILITY

Workflows are compiled, so they don't change between runs. The same inputs produce the same outputs, and replay is exact.

VISIBILITY

Every run is logged: what ran, when, with what data, and what changed. Available to you, and to your auditors.

OPEN

The platform is open source. Read the code on GitHub. Self-host the engine. The safety claims are auditable, not asserted.

Whether automation succeeds depends on the processes you already have.

Book a call. We'll look at yours and tell you whether automation will help — or whether it won't.